The challenges of releasing quality at speed keep on growing. Digital transformation demands a faster time to market and keeps us in a constant race to push out changes and give our customers new features before our competitors do. We’re no longer in the business of selling off-the-shelf products and updating the software once every few months. Today, software is delivered digitally, embedded in products and updated online, or provided as a service.

To stay ahead in the continuous race of digital transformation, you need a flexible approach that can quickly adjust to the changing needs of the market. Most companies have moved to an agile approach in order to move faster and release more often. The industry standard nowadays is smaller teams, organized into cross-functional groups, each in charge of smaller services.

As teams handle components that are split into ever-smaller pieces, it becomes harder to understand how one service impacts another, which services use the code being modified, and whether that code is tested sufficiently in the right places to maintain high quality.

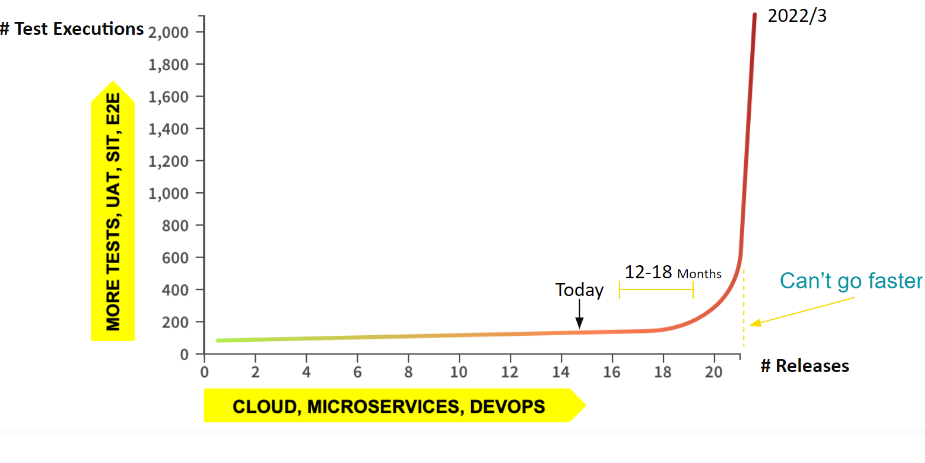

So we write more and more tests at various levels. We write unit tests to test each individual part of the code. We also write end-to-end tests to check that the applications behave as expected, and UI tests to check that users can use the applications properly. These higher-level tests are expensive: they require resources both to write and to run.

What this means is that we will soon reach a point where we simply cannot go any faster. The number of tests keeps multiplying, and so does the time it takes to run them. This holds back development cycles, which sit waiting for test results to learn what isn’t working and needs to be fixed.

The only option would seem to be removing tests, but since teams don’t really understand what the tests are actually hitting, they worry that removing any of them will let something slip through and lead to more production issues.

Continuing on this path without doing anything means we’re going to hit that roadblock, which in turn will hurt the quality of our product. Typically, even though they are reluctant to do so, teams start cutting tests based on guesswork, or settle for running only unit tests, either because they are instructed to or because they simply can’t run them all. Some managers and teams convince themselves that unit and component tests are good enough, and those tests get too much attention just because they’re faster. But by relying on these lower-level tests alone, they lose out on quality.

Software needs to be tested properly. Adding tests blindly, without really knowing where you’re adding them, and running every test you have is not the answer. It doesn’t meet the demand to get everything out as fast as possible. We need to dramatically reduce the number of tests required, execute only the ones that are needed using smart test selection, and achieve high quality by identifying and blocking untested code changes before they reach production.

So how can we do this? We need a smart way to identify which tests actually need to be executed, which reduces the number of tests run, and to identify where to add the minimum number of new tests to target high-risk areas.

I’d like to concentrate here on how we reduce the number of tests.

Back in 2017, an article published on the website of Martin Fowler, chief scientist at ThoughtWorks, described it this way:

“Test Impact Analysis (TIA) is a modern way of speeding up the test automation phase of a build. It works by analyzing the call-graph of the source code to work out which tests should be run after a change to production code. Microsoft has done some extensive work on this approach, but it’s also possible for development teams to implement something useful quite cheaply.”

In other words, Test Impact Analysis is a way to understand which parts of the code each test actually hits, and then to use that knowledge to decide which tests should be run or skipped based on the code changes themselves.

Here are some of the challenges TIA helps us handle by reducing the number of tests that are run:

- When developers push their code changes, a CI/CD process kicks off, and building, deploying and testing that code can take a long time, so they move on to something else in the meantime. When they finally get the feedback, they need to switch back, and all this context switching wastes time, causes frustration, and eventually leads to lower-quality code. By running the minimum number of tests, we reduce those context switches and give developers feedback in a timeframe that lets them handle issues more efficiently.

- Because regression tests take a long time, they are usually not part of the standard CI/CD process and typically run nightly or even less often. Test Impact Analysis allows you to run a partial regression cycle as part of the CI/CD process and give that feedback to your dev teams before they switch to another task. Of course, you can still run the full regression once a night, or whenever you need the full picture.

- For hotfixes, time is of the essence. You need to get them out as quickly as possible. But how do you know which regression tests to run? Typically, either they aren’t run at all, or teams guess and hope they’ve tested what should have been run.

Working with Test Impact Analysis, you know which tests you need to run to cover the changed code, so you can release hotfixes with more confidence.

- The next challenge is cost. Running so many tests requires a large amount of resources and a complex CI setup to orchestrate it all. You’re running all these tests on multiple machines, in multiple test environments, to run them in parallel, and all this hardware costs a lot of money to keep running. You’re also investing time in writing and maintaining that complex CI, which requires people, who also come at a high cost. There are, of course, automation services that will do much of this for you, but they aren’t cheap either. Test Impact Analysis lets you reduce CPU time and spin up fewer machines, cutting both expenses and time spent.

- One of the biggest cost factors is manual testing. Many companies still have some form of manual testing, either because their automated tests are not mature enough, or because some areas aren’t worth the cost of automating. Manually testing certain things can take hours or days, so to reduce that time you hire many manual testers to work in parallel, and you need to provide them with environments to test on. This can multiply your costs dramatically.

Test Impact Analysis can use AI to evaluate previously executed tests, so that manual testers know exactly which tests they need to run based on the code changes, saving a lot of time and resources.

So how does SeaLights use TIA?

Our system uses machine learning to understand which parts of the code each test exercises. This works at any testing stage, including post-deployment regression tests and even manual tests.

Each time a build starts, we identify which code has been modified. Based on our knowledge of which tests previously went through the changed code, we automatically feed back into your testing framework which tests to skip, without requiring any changes to your tests or your code.

For manual testing, we provide feedback to the manual testers about which tests they need to perform.

With the SeaLights TIA tool, you execute the most relevant tests first, according to their risk priority and failure history, and skip “green” tests. SeaLights supports shift-left testing and allows tests to run as early as possible. It enables quick execution of the relevant unit and integration tests before code is merged to the master branch, and it also supports executing a subset of black-box tests, such as component tests, functional tests, and end-to-end tests.
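Ordering tests by failure history can be illustrated with a simple heuristic. The sketch below is not SeaLights’ scoring model; it assumes a hypothetical `RunHistory` record per test and sorts by recent failure rate (highest first), breaking ties by running the faster test first so that likely failures surface as early as possible.

```python
"""Hypothetical prioritization heuristic: run the tests most likely
to fail first, using each test's recent pass/fail history."""

from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class RunHistory:
    name: str
    recent_results: Sequence[bool]  # True = passed; oldest first
    avg_duration_s: float


def failure_rate(history: RunHistory) -> float:
    """Fraction of recorded runs that failed; 0.0 when there is no history."""
    if not history.recent_results:
        return 0.0
    failures = sum(1 for passed in history.recent_results if not passed)
    return failures / len(history.recent_results)


def prioritize(tests: List[RunHistory]) -> List[str]:
    """Highest failure rate first; among equal rates, the faster test first."""
    ordered = sorted(tests, key=lambda t: (-failure_rate(t), t.avg_duration_s))
    return [t.name for t in ordered]


if __name__ == "__main__":
    suite = [
        RunHistory("test_search", [True, True, True, True], 2.0),
        RunHistory("test_payment", [True, False, True, False], 30.0),
        RunHistory("test_cart", [True, True, False, True], 5.0),
        RunHistory("test_login", [True, False, True, False], 4.0),
    ]
    print(prioritize(suite))
    # ['test_login', 'test_payment', 'test_cart', 'test_search']
```

In practice this ordering would be applied to the tests already selected by impact analysis; a real tool might also weight recent failures more heavily or treat tests with no history as high priority.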

The SeaLights TIA tool increases test effectiveness by identifying and analyzing flaky tests, and by identifying and removing redundant tests. It also displays the full code-to-test and test-to-code map to help you run only the tests you need.
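Both ideas can be approximated with simple rules, sketched below under stated assumptions; this is not how SeaLights implements them. The hypothetical `find_flaky` flags a test that both passed and failed on the same build (identical code), and the hypothetical `find_redundant` flags tests whose coverage is a strict subset of another test’s, or identical to an earlier-named test’s. Identical coverage does not mean identical assertions, so the output is a list of candidates for human review rather than automatic deletion.

```python
"""Hypothetical heuristics for spotting flaky and redundant tests."""

from collections import defaultdict
from typing import Dict, Iterable, List, Set, Tuple


def find_flaky(results: Iterable[Tuple[str, str, bool]]) -> List[str]:
    """results: (test_name, build_id, passed) tuples.

    A test is flagged when it both passed and failed on the same build,
    i.e. its outcome changed while the code under test did not.
    """
    outcomes: Dict[Tuple[str, str], Set[bool]] = defaultdict(set)
    for test, build, passed in results:
        outcomes[(test, build)].add(passed)
    return sorted({test for (test, _build), seen in outcomes.items() if len(seen) == 2})


def find_redundant(coverage: Dict[str, Set[str]]) -> List[str]:
    """Flag tests whose covered units are a strict subset of another test's,
    or identical to an earlier-named test's (one of each duplicate survives).
    Tests with no recorded coverage are never flagged."""
    redundant = []
    names = sorted(coverage)
    for name in names:
        mine = coverage[name]
        if not mine:
            continue
        for other in names:
            if other == name:
                continue
            theirs = coverage[other]
            if mine < theirs or (mine == theirs and other < name):
                redundant.append(name)
                break
    return redundant


if __name__ == "__main__":
    runs = [("t1", "b1", True), ("t1", "b1", False), ("t2", "b1", True), ("t2", "b2", False)]
    print(find_flaky(runs))  # ['t1']
    print(find_redundant({"a": {"x"}, "b": {"x", "y"}, "c": {"x", "y"}}))  # ['a', 'c']
```

Note that `t2` is not flagged: it failed on a different build, which may simply reflect a real regression.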