Agile testing methodology is an inseparable part of agile methodology. In agile, testing is just one aspect of the software development lifecycle. It runs continuously alongside the development effort, and is a collaborative effort between testers, developers, product owners and even customers.

On this page you will learn:

- What agile testing is

- How agile methodology impacts testing

- How to use four popular agile testing methods

What is Agile Testing?

Agile testing is a core part of agile software development. Unlike in previous software methodologies, where testing was a separate stage that occurred after development was complete, in an agile methodology testing begins at the very start of the project, even before development has started. Agile testing is continuous testing, which goes hand in hand with development work and provides an ongoing feedback loop into the development process.

Another evolution in agile testing is that testers are no longer a separate organizational unit (there is no “QA department”). Testers are now part of the agile development team. In many cases, agile organizations don’t have dedicated “testers” or “QA engineers”; instead, everyone on the team is responsible for testing. In other cases, there are test specialists, but they work closely with developers throughout the software development cycle.

How Agile Methodology Impacts Testing

The agile manifesto, the cornerstone of agile methodology, has several points that are especially relevant for testers:

| Agile Manifesto Directive | Implication for Testing |
| --- | --- |
| Individuals and interactions over processes and tools | Testers should work closely with developers, product owners, and customers to understand what is being developed, who it is for, and what will make it successful. |
| Working software over comprehensive documentation | Testers in the agile world do not have a book of requirements they can test against. They need to carefully define and refine acceptance criteria together with their teams and test against those criteria. |
| Responding to change over following a plan | Agile testers must prioritize and re-prioritize their tests, focusing on what will help the team reach its goal, minimize risk and keep customers happy. |
| Customer collaboration over contract negotiation | Whether they have direct contact with customers or not, testers in an agile environment should be focused on what matters for the customer, which can vary from product to product. Customers may focus on new features, stability, security or other requirements, depending on their needs and the product’s maturity. |

4 Agile Testing Methods

1. Behavior Driven Development (BDD)

BDD encourages communication between project stakeholders so that all team members understand each feature before development begins. In BDD, testers, developers, and business analysts create “scenarios”, which facilitate example-focused communication.

Scenarios are written in a specific format, the Gherkin Given/When/Then syntax. They contain information on how a feature behaves in different situations with varying input parameters. These are known as “executable specifications” as they are made up of both specifications and inputs to the automated tests.

The idea of BDD is that the team creates scenarios, builds tests around those scenarios which initially fail, and then builds the software functionality that makes the scenarios pass. It is different from traditional Test Driven Development (TDD) in that complete software functionality is tested, not just individual components.
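As a minimal sketch of the scenario-first workflow described above, the example below pairs a Gherkin-style scenario with plain Python step functions. The `Cart` class, the `SAVE10` discount code and the step function names are all hypothetical, invented for illustration. A real project would more likely bind the scenario text to steps with a BDD framework such as Cucumber, rather than calling the steps by hand.

```python
from dataclasses import dataclass, field

# Hypothetical scenario text in Gherkin's Given/When/Then form.
# In this sketch it is documentation only; nothing parses it.
SCENARIO = """
Scenario: Applying a discount code at checkout
  Given a cart containing one item priced at 100
  When the customer applies the discount code "SAVE10"
  Then the cart total is 90
"""


@dataclass
class Cart:
    """Hypothetical system under test, built after the scenario was agreed."""
    prices: list = field(default_factory=list)
    discount_percent: int = 0

    def add_item(self, price):
        self.prices.append(price)

    def apply_code(self, code):
        # Only one discount code is known in this sketch.
        if code == "SAVE10":
            self.discount_percent = 10

    def total(self):
        subtotal = sum(self.prices)
        return subtotal * (100 - self.discount_percent) // 100


# One step function per line of the scenario.
def given_a_cart_with_one_item(price):
    cart = Cart()
    cart.add_item(price)
    return cart


def when_the_customer_applies_code(cart, code):
    cart.apply_code(code)


def then_the_cart_total_is(cart, expected):
    assert cart.total() == expected, f"expected {expected}, got {cart.total()}"


def run_scenario():
    """Execute the steps in the order the scenario lists them."""
    cart = given_a_cart_with_one_item(100)
    when_the_customer_applies_code(cart, "SAVE10")
    then_the_cart_total_is(cart, 90)
    return cart.total()


run_scenario()
```

Before `Cart.apply_code` exists, the `Then` step fails. Making it pass is what drives the implementation, which is the fail-first loop the scenario approach relies on.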


Best practices for testers using a BDD methodology:

- Streamline documentation to ensure the process is efficient

- Embrace a “three amigos” approach, where the developer, product owner, and tester work together to define scenarios and tests

- Use a declarative test framework, such as Cucumber, to specify criteria

- Build automated tests and reuse them across scenarios

- Have business analysts learn the Gherkin syntax and write test cases

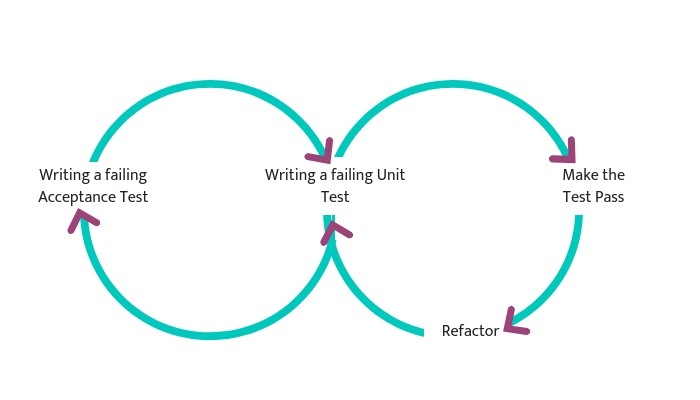

2. Acceptance Test Driven Development (ATDD)

ATDD involves the customer, developer, and tester. “Three Amigos” meetings are held to gather input from these three roles and use it to define acceptance tests. The customer focuses on the problem, the developer pays attention to how the problem will be solved, and the tester looks at what could go wrong.

The acceptance tests represent a user’s perspective and specify how the system will function. They also ensure that the system functions as intended. Acceptance tests can often be automated. As in the BDD approach, acceptance tests are written first and initially fail; software functionality is then built around the tests until they pass.
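The following is a small sketch of automated acceptance tests using Python's standard `unittest` module. The login criteria, the `AccountService` class and the example email address are assumptions made up for illustration. In practice the tests would be agreed first and would fail until the service is implemented.

```python
import unittest


class AccountService:
    """Hypothetical system under test, implemented to satisfy the tests below."""

    def __init__(self):
        self._passwords = {}

    def register(self, email, password):
        self._passwords[email] = password

    def log_in(self, email, password):
        # Unknown users have no stored password, so the lookup fails.
        return email in self._passwords and self._passwords[email] == password


class LoginAcceptanceTest(unittest.TestCase):
    """Each test encodes one acceptance criterion from the user's perspective."""

    def setUp(self):
        self.accounts = AccountService()
        self.accounts.register("ana@example.com", "s3cret")

    def test_registered_user_can_log_in(self):
        self.assertTrue(self.accounts.log_in("ana@example.com", "s3cret"))

    def test_wrong_password_is_rejected(self):
        self.assertFalse(self.accounts.log_in("ana@example.com", "wrong"))

    def test_unknown_user_is_rejected(self):
        self.assertFalse(self.accounts.log_in("bob@example.com", "s3cret"))


def run_acceptance_tests():
    """Run the acceptance suite and report whether every criterion passed."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(LoginAcceptanceTest)
    result = unittest.TextTestRunner(verbosity=0).run(suite)
    return result.wasSuccessful()


if __name__ == "__main__":
    run_acceptance_tests()
```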


Best practices for testers using an ATDD methodology include:

- Interact directly with customers to align expectations, for example, through focus groups

- Involve customer-facing team members to understand customer needs, including customer service agents, sales representatives, and account managers

- Develop acceptance criteria according to customer expectations

- Prioritize two questions: How should we validate that the system performs a certain function? Will customers want to use the system when it has this function?

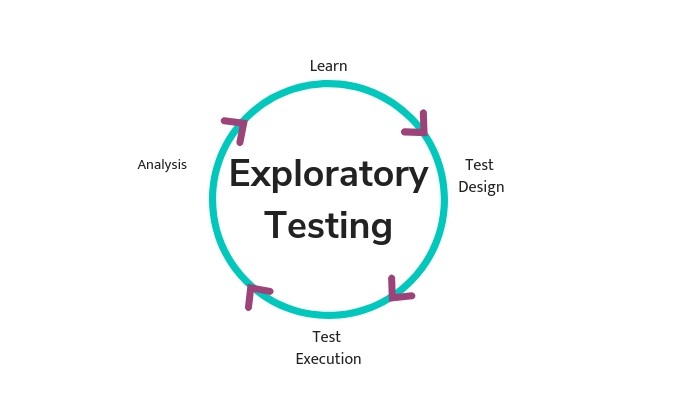

3. Exploratory Testing

In exploratory testing, test design and test execution happen together. This type of testing focuses on interacting with working software rather than separately planning, building and running tests.

Exploratory testing lets testers “play with” the software in a chaotic way. Exploratory testing is not scripted: testers mimic possible user behaviors and get creative, trying to find actions or edge cases that will break the software. Testers do not document the exact steps they took to test the software, but when they find a defect, they document it as usual.

Best practices for exploratory testing:

- Organize functionality in the application, using a spreadsheet, mind map, etc.

- Even though there is no detailed documentation of how tests were conducted, track which software areas were or were not covered with exploratory testing

- Focus on areas and scenarios in the software which are at high risk or have high value for users

- Ensure testers document their results so they can be accountable for areas of software they tested
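The coverage tracking suggested above can be as light as a small table of areas. Here is a sketch, assuming a hypothetical 1-to-5 risk scale and made-up area names, that lists the areas not yet explored with the riskiest first.

```python
from dataclasses import dataclass


@dataclass
class Area:
    name: str
    risk: int              # 1 (low) to 5 (high); hypothetical scale
    explored: bool = False
    notes: str = ""        # what the tester observed, if anything


def next_areas_to_explore(areas):
    """Return the areas not yet explored, highest risk first."""
    pending = [a for a in areas if not a.explored]
    return sorted(pending, key=lambda a: a.risk, reverse=True)


# Made-up functional areas for illustration.
areas = [
    Area("checkout", risk=5),
    Area("search", risk=3, explored=True, notes="Long queries are cut off"),
    Area("profile settings", risk=2),
    Area("password reset", risk=4),
]

print([a.name for a in next_areas_to_explore(areas)])
# prints ['checkout', 'password reset', 'profile settings']
```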

4. Session-Based Testing

This method is similar to exploratory testing, but is more orderly, aiming to ensure the software is tested comprehensively. It adds test charters, which help testers know what to test, and test reports, which allow testers to document what they discover during a test. Tests are conducted during time-boxed sessions.

Each session ends with a face-to-face brief between tester(s) and either the developers responsible, scrum master or manager, covering the five PROOF points:

- What was done in the test (Past)

- What the tester discovered or achieved (Results)

- Any problems that got in the way (Obstacles)

- Remaining areas to be tested (Outlook)

- How the tester feels about the areas of the product they tested (Feelings).

Best practices for session-based testing include:

- Define a goal so testers are clear about priorities of testing in the current sprint

- Develop a charter that states areas of the software to test, when the session will occur and for how long, which testers will conduct the session, etc.

- Run uninterrupted testing sessions with a fixed, predefined length

- Document activities, notes, and also takeaways from the face-to-face brief in a session report
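A charter and the PROOF points map naturally onto two small records. The sketch below is one possible shape, not a standard format; the field names, the sample mission and the report layout are assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Charter:
    mission: str
    areas: list
    testers: list
    start: datetime
    duration: timedelta    # the fixed, predefined session length

    @property
    def end(self):
        return self.start + self.duration


@dataclass
class ProofDebrief:
    past: str        # what was done in the session
    results: str     # what was discovered or achieved
    obstacles: str   # problems that got in the way
    outlook: str     # remaining areas to be tested
    feelings: str    # how the tester feels about the tested areas


def session_report(charter, debrief):
    """Combine the charter and the debrief into a plain-text session report."""
    lines = [
        f"Charter: {charter.mission}",
        f"Areas: {', '.join(charter.areas)}",
        f"Testers: {', '.join(charter.testers)}",
        f"Time box: {charter.start:%Y-%m-%d %H:%M} to {charter.end:%H:%M}",
        f"Past: {debrief.past}",
        f"Results: {debrief.results}",
        f"Obstacles: {debrief.obstacles}",
        f"Outlook: {debrief.outlook}",
        f"Feelings: {debrief.feelings}",
    ]
    return "\n".join(lines)


# Made-up session data for illustration.
charter = Charter(
    mission="Explore checkout with expired payment cards",
    areas=["checkout", "payment"],
    testers=["Dana"],
    start=datetime(2024, 3, 4, 10, 0),
    duration=timedelta(minutes=90),
)
debrief = ProofDebrief(
    past="Tried expired, blocked and foreign cards",
    results="One defect: expired card shows a blank error",
    obstacles="Test payment gateway was slow",
    outlook="Refund flow not yet covered",
    feelings="Confident about card validation, unsure about refunds",
)
print(session_report(charter, debrief))
```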

How to Focus Agile Testing Efforts with Quality Intelligence

A central tenet of agile testing is that testing must be prioritized and focused on the user. You can’t test everything, so you must focus on the things that users care about. However, beyond gut feelings, most teams do not have data to point them to the features or areas of the product that can have the biggest impact on their customers.

A new category of tools, called Quality Intelligence Platforms, can provide this data, allowing teams to sharply focus their work on customer-facing quality issues.

For example, SeaLights is a platform that collects data about test execution at all testing levels: unit, integration and acceptance testing. It also tracks code changes and production usage of the product. It analyzes the data and identifies “testing gaps”: areas of the product which have recently changed, are used in production, but are not adequately tested.

Testing gaps are exactly where agile teams should be focusing testing efforts. Instead of over-testing, or reacting to previous production faults, they can target areas of the product which are at high risk of quality issues. These are the areas that customers will care about the most.
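The definition of a gap given above (recently changed, used in production, not adequately tested) can be illustrated with a few set operations. This is a toy sketch only and does not describe how SeaLights or any other tool computes its results. The function name, the area names and the 0.8 coverage threshold are assumptions.

```python
def find_test_gaps(recently_changed, used_in_production, coverage, min_coverage=0.8):
    """Return areas that changed recently, are used in production,
    and fall below the coverage threshold (hypothetical, 0.0 to 1.0)."""
    gaps = []
    for area in recently_changed & used_in_production:
        # Areas with no coverage data are treated as untested.
        if coverage.get(area, 0.0) < min_coverage:
            gaps.append(area)
    return sorted(gaps)


# Made-up data for illustration.
recently_changed = {"checkout", "search", "admin reports"}
used_in_production = {"checkout", "search", "login"}
coverage = {"checkout": 0.45, "search": 0.92, "login": 0.30}

print(find_test_gaps(recently_changed, used_in_production, coverage))
# prints ['checkout']
```

Here `login` is poorly tested but has not changed, and `admin reports` changed but is not used in production, so neither counts as a gap under this definition.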
